Regional banking rarely changes overnight, yet Customers Bank’s decision to wire frontier AI into its entire commercial franchise signaled a deliberate break from incrementalism. The aim was system-level redesign: compress turnaround times, elevate service, and scale personalization without diluting the single point of contact clients value. The Pennsylvania lender, with roughly $26 billion in assets and 25 locations, signed a multi‑year agreement with OpenAI to embed models across lending, deposits, and payments, reframing technology as a fabric rather than a feature. Management described the move as a path to “AI‑native” status, not a chatbot facelift. That ambition rested on groundwork already in place: governance guardrails, operating standards for safe deployment, and reported adoption of OpenAI‑powered tools by 75% of employees. The pitch to customers was pragmatic: faster answers, fewer handoffs, and bankers freed to spend time on complex, high‑touch work.

Inside the Operating Model: From Models to Agents

Customers Bank’s arrangement with OpenAI paired access to frontier models with hands‑on engineering, enabling a co‑developed adoption roadmap that connected experimentation to production. The stated focus stretched beyond digital channels into the “full lifecycle” of commercial banking: document intake for loans, anomaly detection in deposits, and payment exception handling, areas where unstructured data and judgment calls often slow progress. Building on this foundation, the bank turned to the everyday tasks that matter to clients: quicker credit memos, cleaner KYC refreshes, and automated follow‑ups that closed loops rather than spawning tickets. Human‑in‑the‑loop review and audit trails anchored risk controls, while role‑based access, red‑teaming, and prompt governance contained model sprawl. The intent was to blend speed with accountability, ensuring employees could trace outputs and override them when needed.

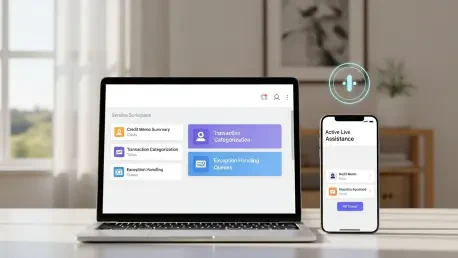

This approach led naturally to agent-based experiences. Through a separate agreement with ElevenLabs, the bank planned AI assistants tuned for distinct moments: a coaching companion for call centers that suggested compliant phrasing in real time, an onboarding agent that guided new clients through documentation, and always‑on voice and chat support across phone, web, and mobile. These agents were scoped to handle routine account queries, card services, and transfers, escalating exceptions to bankers. The design choice mattered. Rather than bolting voice onto existing menus, the agents drew context from CRM and core systems to shorten each interaction, with transcripts feeding continuous improvement. In contrast to legacy IVR trees that trapped callers, the bank bet on natural-language flows, rigorous guardrails, and clear fallback to humans. If it worked, the frontline would spend more time diagnosing client needs and less time hunting for information.

Competitive Stakes: Can a Regional Bank Lead?

The bank’s early deployment of ChatGPT Enterprise in 2023 positioned it alongside global peers such as BBVA, Santander, and Commonwealth Bank of Australia, which had already tested enterprise‑grade generative AI at scale. Regional leadership, however, rests on evidence, not headlines. The first proof points to watch included cycle time for commercial underwriting, digital containment rates in service channels, accuracy of transaction categorization, and compliance exceptions per thousand interactions. Through this lens, the bank framed AI as a lever for 24/7 service without sacrificing relationship depth. Meanwhile, OpenAI’s broader financial services push, including the acquisition of personal finance startup Hiro, suggested a maturing toolkit that might accelerate productization. The competitive edge, then, hinged on process redesign rather than model access alone: rewriting workflows so bankers could intervene at the precise moments where judgment and context carried the most weight.

The path forward for banks studying this playbook is actionable. Start with one end‑to‑end journey, such as onboarding for middle‑market clients, and instrument it for measurable gains in cycle time, error rates, and client satisfaction. Stand up a model risk framework that covers prompt libraries, fine‑tuned variants, and agent behaviors, with clear rollback plans. Establish a dual‑track operating cadence: weekly ship cycles for agent improvements and quarterly reviews for underwriting policy changes influenced by AI insights. Fund a service design function to keep experiences coherent across phone, web, and banker‑led channels. Finally, publish internal scorecards so frontline teams can see progress and flag drift early. If Customers Bank sustains its roadmap, maintains guardrails, and ties outcomes to banker productivity and client value, regional leadership is within reach, and replicable by peers disciplined enough to build for scale rather than demos.